In the age of sail, naval combat was “muzzle to muzzle”. Before 1800 most such actions took place at ranges between 60 and 150 feet (18 – 46 m).

The Civil War Battle of Cherbourg in 1864, which pitted the Mohican-class sloop-of-war USS Kearsarge against the Confederate commerce raider CSS Alabama, opened at a range of 3,000 feet (910 m).

In 1884 the invention of the steam turbine made possible speeds in naval vessels never before dreamed of. By the turn of the 20th century, rifled guns of vastly larger size hurled explosive shells over the horizon. Enormously complex fire control solutions had to be calculated for range, the movement of both vessels, elevation, the yaw of the firing ship, meteorological conditions, even the ambient temperature in the powder magazines.

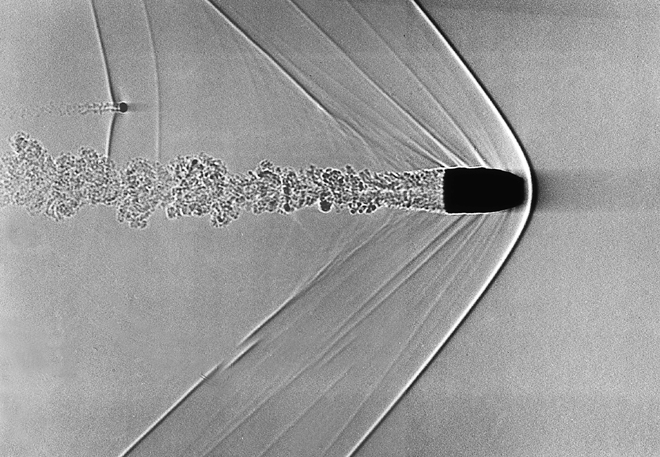

The projectile in flight is subject to gravity, drag, wind and air pressure and, at longer ranges, even the rotation of the planet, an effect that varies with latitude. A salvo may be accurately fired at a moving target only to fall harmlessly several ship lengths behind it. With the other guy shooting back, there isn’t always another chance to get it right.
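To give a feel for the arithmetic behind a single firing-table entry, here is a minimal Python sketch that steps a shell's flight forward in small time increments under gravity and air drag only. It is a toy model, not how any navy or ballistics lab actually did it: the function names, the lumped drag coefficient `k` (drag acceleration taken as `k·|v|·v`) and the sample numbers are illustrative assumptions, and wind, air pressure, shell spin and the Earth's rotation are all left out.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def vacuum_range(v0: float, angle_deg: float) -> float:
    """Closed-form range on flat ground with gravity alone (no air)."""
    theta = math.radians(angle_deg)
    return v0 ** 2 * math.sin(2 * theta) / G


def range_with_drag(v0: float, angle_deg: float, k: float, dt: float = 0.001) -> float:
    """Step a point-mass shell through time until it falls back to launch height.

    k is a hypothetical lumped drag coefficient (1/m): drag acceleration = -k * |v| * v.
    Wind, air-pressure changes, shell spin and Earth's rotation are ignored.
    """
    theta = math.radians(angle_deg)
    x, y = 0.0, 0.0
    vx, vy = v0 * math.cos(theta), v0 * math.sin(theta)
    while True:
        speed = math.hypot(vx, vy)
        # Drag opposes the velocity; gravity pulls straight down.
        ax = -k * speed * vx
        ay = -G - k * speed * vy
        # Semi-implicit Euler: update velocity first, then position.
        vx += ax * dt
        vy += ay * dt
        x_new, y_new = x + vx * dt, y + vy * dt
        if y_new < 0.0:
            # Interpolate linearly to where the path crosses launch height.
            frac = y / (y - y_new)
            return x + frac * (x_new - x)
        x, y = x_new, y_new


if __name__ == "__main__":
    # Hypothetical shell: 800 m/s muzzle velocity, 30 degrees elevation.
    print(f"vacuum:    {vacuum_range(800, 30):,.0f} m")
    print(f"with drag: {range_with_drag(800, 30, 2e-5):,.0f} m")
```

Comparing the two printed ranges shows how much distance drag alone takes away, before any of the other corrections come into play. Semi-implicit Euler was picked for brevity; a serious calculation would use a measured drag curve for the particular shell and add wind, air density and the rest of the corrections listed above.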

On land, artillery fire control solutions are nearly as complex, and all of that complexity pertains to a single gun. What, then, is to be done about training all the guns on a warship against a single target? What about a whole fleet?

Over time, increasingly accurate solutions were devised but, by World War 2, the race for fire control supremacy had outstripped the old ways. Failure could be the difference between life and death.

We are surrounded today by computing horsepower, undreamed of by any but the science fiction buffs of earlier generations. The 8088-processor powered IBM personal computer released 40 short years ago had eight times more memory than “Apollo’s brain”, the guidance computer navigating Apollo 11 to the moon and back, ten years earlier.

The iPhone 5s has 1,300 times the computing power of the Apollo moon lander.

A wonder for its time, the IBM PC’s 8088 processor addressed memory in 64 KB segments, within a maximum address space of 1 MB. The 80286-based PC/AT, released three years later, sported a 20 MB internal hard drive. Today, 128 bucks at Walmart will get you 4 GB of memory and a 160 GB hard drive.
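The 64 KB and 1 MB figures both come from how the 8088 built a 20-bit physical address out of two 16-bit values: a segment, shifted left by four bits (multiplied by 16), plus an offset. Each segment is therefore a 64 KB window, and the 20-bit result covers 1 MB. A minimal Python sketch of that real-mode calculation follows; the function and constant names are our own, chosen for illustration.

```python
ADDRESS_MASK = 0xFFFFF  # 20 address lines on the 8088 -> 2**20 bytes = 1 MB


def physical_address(segment: int, offset: int) -> int:
    """Translate a real-mode segment:offset pair into a 20-bit physical address.

    The segment is multiplied by 16 (shifted left four bits) and the offset is
    added. Anything past 1 MB wraps around, because the 8088 has no 21st address line.
    """
    if not (0 <= segment <= 0xFFFF and 0 <= offset <= 0xFFFF):
        raise ValueError("segment and offset must each fit in 16 bits")
    return ((segment << 4) + offset) & ADDRESS_MASK


# One segment is a 64 KB window: offsets 0x0000 through 0xFFFF.
SEGMENT_SIZE = 0xFFFF + 1  # 65,536 bytes

if __name__ == "__main__":
    print(hex(physical_address(0x1234, 0x0005)))  # two different pairs...
    print(hex(physical_address(0x1230, 0x0045)))  # ...reach the same byte
    print(hex(physical_address(0xFFFF, 0xFFFF)))  # wraps past the 1 MB mark
```

Note that `0x1234:0x0005` and `0x1230:0x0045` land on the same byte, 0x12345: many segment:offset pairs alias one physical address, and on the 8088 the top of the range wraps back around to the bottom.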

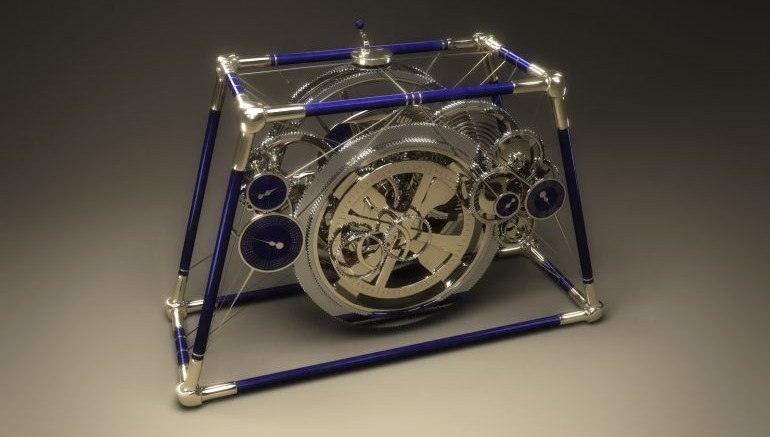

Back to artillery. The idea of a calculating machine was anything but new. The abacus has been around for 3,000 years. The hand-operated Antikythera mechanism, an analog computer dredged up from the ocean bottom in 1901, may date back as far as 205 BC. The “castle clock” described in 1206 by the Muslim polymath Ismail al-Jazari may be the world’s first programmable computer, capable of showing local time and the solar and lunar orbits, and even of adjusting for the changing length of day over the course of the year.

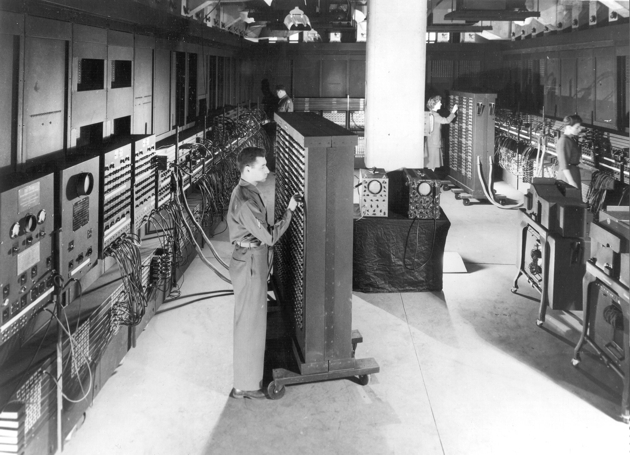

On May 31, 1943, the US Army commissioned a study for a giant electronic “brain” to calculate artillery firing tables. Work began under Johns Hopkins-trained physicist John Mauchly and chief engineer John Presper Eckert of the University of Pennsylvania’s Moore School of Electrical Engineering.

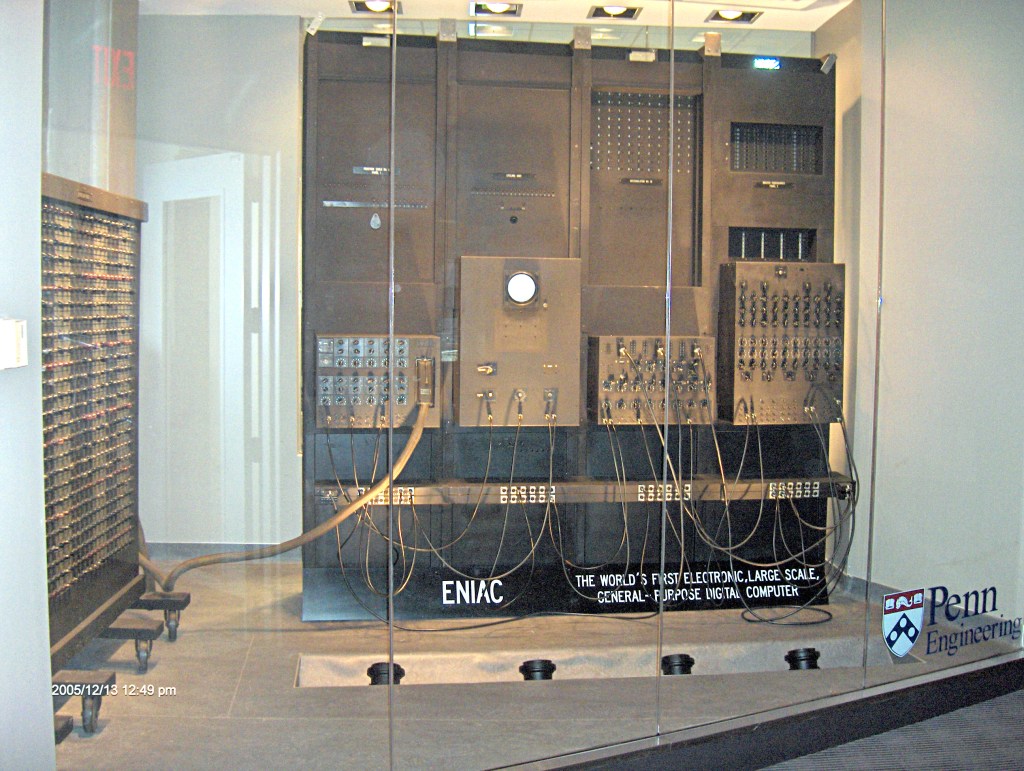

It took a year for the team to design the machine and another 18 months to build it. The Electronic Numerical Integrator and Computer (ENIAC) was officially powered up in November 1945.

The one thing those machines had in common is that they were all hardware. “Software”, as programmers of the 1940s knew it, meant setting instructions directly into the machine; on ENIAC, that was done by plugging cables and setting switches by hand.

By December 1945 the war was over, but the military still had work for ENIAC to do. The first real-world calculations were performed on December 10.

ENIAC was formally dedicated at the University of Pennsylvania on February 15, 1946. Risible though the machine may be by modern standards, ENIAC was a wonder of science and technology for its time. The press dubbed the thing a “Giant Brain”. A trajectory that took a human 20 hours to calculate took ENIAC 30 seconds. Since 20 hours is 72,000 seconds, that is a 2,400-fold speedup: one ENIAC was the computational equal of 2,400 humans.

What the press didn’t see was what went on behind the scenes. In the early days of the war, the Moore School worked with the Ballistic Research Laboratory (BRL), where a team of 100 “human computers” was trained to hand-calculate firing tables for artillery shells. With so many men off at war, and programming seen at the time as “clerical work”, the BRL hired mostly women.

These were the “Top Secret Rosies”, the female “computers” of WW2. When the ENIAC project began, six of them came over as programmers.

ENIAC’s projects came to include calculations for hydrogen bomb design, weather prediction, cosmic-ray studies, thermal ignition, random-number studies and wind-tunnel design.

ENIAC began as a room-sized modular computer made up of individual panels that performed different functions. Numbers were passed back and forth between panels on buses called trays. At its height ENIAC had 18,000 vacuum tubes, 7,200 crystal diodes, 1,500 relays, 70,000 resistors, 10,000 capacitors and something like 5 million hand-soldered joints, occupying 1,800 square feet. The machine consumed 150 kilowatts of electricity. Rumor had it that when ENIAC was switched on, the lights all over Philadelphia dimmed.

All things must come to an end. ENIAC, once a wonder of science and technology, was already obsolete by 1956. Fully built out, the machine weighed in at 25 tons and performed 5,000 calculations per second. The iPhone 6, weighing in at 4.55 ounces, performs 25 billion calculations per second.

Today the electronic descendants of ENIAC perform tasks of ever-increasing, even mind-boggling complexity. Mapping the human genome. Climate research. Exploration for oil and gas.

Before long, top-of-the-line mainframe computers were performing not at thousands of instructions per second but in MIPS: millions of instructions per second. The first supercomputer arrived in 1965 with so much horsepower as to require a whole new unit of measure: FLOPS, or “floating-point operations per second”.

The term wasn’t in use in ENIAC’s day but, if it had been, that bad boy was chunkin’ along at 500 FLOPS. Supercomputer performance has since climbed the ladder of metric prefixes, each rung a thousand times the last, stretching vocabularies to new and hitherto unimagined heights. KiloFLOPS was eclipsed by megaFLOPS and gigaFLOPS, and the climb continued ever onward. The “tera” prefix (trillion) gave way to the dizzying petaFLOPS, one quadrillion: a thousand trillion floating-point operations per second.

In April 2020 the distributed computing network Folding@home achieved a computing performance of one exaFLOPS. Unless you’re in interplanetary space, I can’t think of another use for such a number. Unless we’re talking about the federal debt.

As of January 2021, no single machine has scaled such heights, but they’re working on it. One exaFLOPS. A quintillion floating-point operations per second. The estimated speed of the human brain, at the neural level.
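Each rung on the FLOPS ladder sits a factor of 1,000 above the last, from kilo (10^3) through mega, giga, tera and peta up to exa (10^18). The short Python sketch below, with names of our own choosing, expresses a raw rate using the largest SI prefix that fits, and checks the gap between ENIAC’s 500 FLOPS and one exaFLOPS.

```python
# SI prefixes used on the FLOPS ladder, largest first; each step is x1,000.
PREFIXES = [
    (10**18, "exa"),
    (10**15, "peta"),
    (10**12, "tera"),
    (10**9, "giga"),
    (10**6, "mega"),
    (10**3, "kilo"),
]

ENIAC_FLOPS = 500  # the figure quoted above for ENIAC
EXAFLOPS = 10**18  # one quintillion floating-point operations per second


def format_flops(flops: float) -> str:
    """Render a FLOPS rate with the largest SI prefix that keeps the number >= 1."""
    for factor, name in PREFIXES:
        if flops >= factor:
            return f"{flops / factor:g} {name}FLOPS"
    return f"{flops:g} FLOPS"


if __name__ == "__main__":
    for rate in (ENIAC_FLOPS, 5_000, 1.5e15, EXAFLOPS):
        print(format_flops(rate))
    # How many times faster one exaFLOPS is than ENIAC
    print(EXAFLOPS // ENIAC_FLOPS)
```

The last line works out to 2 × 10^15: by this yardstick, what a one-exaFLOPS machine does in a single second would keep ENIAC busy for two quadrillion seconds, or about 63 million years.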
