Most of us experience the ocean as a day at the beach, a boat ride, or a moment spent on one end of a fishing line.

The global ocean is divided into five major basins: the Pacific, Atlantic, Indian, Southern, and Arctic. Together, the oceans cover more than 70 percent of the planet and hold 97 percent of all the water on Earth.

And yet, no more than 20 percent of all that water has ever been explored.

For most dive organizations, the recommended maximum depth for novice divers is 20 meters (65 feet). Around 30 meters (98 feet), a strange form of intoxication called nitrogen narcosis sets in. Divers have been known to remove their own mouthpiece and offer it to fish, with tragic, if not predictable, results. Dives beyond 130 feet enter the world of “technical” diving, involving specialized training, sophisticated gas mixtures and extended decompression schedules. Around 190 feet, the oxygen we rely on for life literally becomes toxic.

On September 17, 1947, French Navy diver Maurice Fargues attempted a new depth record off the coast of Toulon. Descending a weighted line, Fargues signed his name on slates placed at ten-meter intervals. At the three-minute mark, the line showed no sign of movement, and the diver was ordered pulled up. Maurice Fargues, a diver so accomplished he had literally saved the life of Jacques Cousteau only a year earlier, was the first diver to die using an aqualung. His final signature was on the last slate, at 390 feet.

The man had barely scratched the surface.

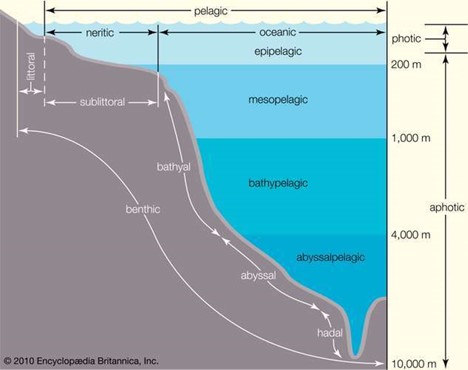

For oceanographers, all that water is divided into slices. The top, or epipelagic, zone extends anywhere from 50 to 656 feet deep, depending on the clarity of the water. Here, phytoplankton convert sunlight into energy, forming the first link in a food chain that supports some 90 percent of all life in the oceans. Fully 95 percent of all photosynthesis in the oceans occurs in this epipelagic, or photic, zone.

The mesopelagic, or “twilight,” zone receives a scant 1 percent of all sunlight. Here temperatures fall and salinity rises, while the weight of the water overhead keeps increasing. Beyond that lies the abyss.

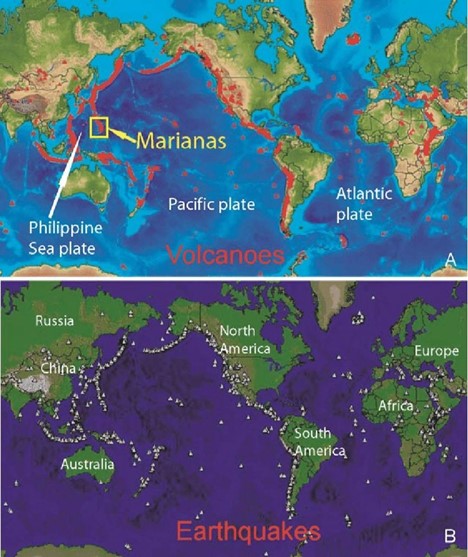

Far below that, the Earth’s rigid outer shell rests on a hot, slowly flowing layer of the mantle. The shell is broken into seven or eight major pieces and several minor ones, called tectonic plates. Over millions of years, these plates move apart along constructive (divergent) boundaries, where new oceanic crust forms mid-ocean ridges. Part of the longest mountain range in the world, the Mid-Atlantic Ridge, runs roughly down the center of the Atlantic Ocean.

The Atlantic basin features deep trenches as well, sites of tectonic fracture and divergence. Far deeper are the subduction zones of the Pacific, where plates collide rather than pull apart as they do along the Mid-Atlantic Ridge. There, one plate slides beneath another and down into the mantle, forming deep oceanic trenches. The deepest of these is the Mariana Trench, near Guam. Published figures for its maximum depth range from about 35,856 feet to 36,037 feet (± 82 feet), found in a small valley at the south end of the trench called Challenger Deep.

If you could somehow pull up Mt. Everest by the roots and sink it in Challenger Deep (this is the highest mountain on the planet we’re talking about), you would still have to swim down about 1.3 miles to reach the summit.
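The Everest comparison is simple subtraction. Here is a minimal Python sketch, assuming Everest stands 29,032 feet tall (the widely reported 2020 survey figure, which the original does not state) and trying both Challenger Deep depth figures given above. The function name is illustrative only.

```python
# Hypothetical sketch: how much water would sit above Everest's summit
# if the mountain were sunk in Challenger Deep.
EVEREST_FT = 29_032                    # assumption: 2020 survey height (8,848.86 m)
CHALLENGER_DEEP_FT = (35_856, 36_037)  # the two depth figures quoted in the text
FEET_PER_MILE = 5_280


def water_above_summit_miles(depth_ft: float, peak_ft: float = EVEREST_FT) -> float:
    """Return the depth of water above the peak, in miles."""
    return (depth_ft - peak_ft) / FEET_PER_MILE


for depth in CHALLENGER_DEEP_FT:
    miles = water_above_summit_miles(depth)
    print(f"Depth {depth:,} ft -> summit about {miles:.2f} miles down")
```

Either depth figure leaves well over a mile of water above the summit.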

The air around us is a fluid with ‘weight’: a barometric pressure, at sea level, of 14.696 pounds per square inch. It’s pressing down on you right now, but you don’t feel it because the fluids inside your body push back. A column of salt water just 10 meters (33 feet) tall exerts that same pressure.

To appreciate the crushing weight of all that water, consider this. The bite force of an American grizzly is 1,200 psi; that of a Nile crocodile, 5,000. The pressure in Challenger Deep is equal to 1,150 atmospheres: over 16,000 pounds per square inch.
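The 33-foot rule turns into a quick estimate of absolute pressure at any depth: one atmosphere of air, plus one more for every 33 feet of seawater. Below is a minimal Python sketch of that rule of thumb. It assumes seawater density stays constant with depth, which real seawater does not quite do, so it will not match measured values exactly. The function name and the list of sample depths are illustrative choices, not from the original.

```python
# Rule-of-thumb hydrostatic pressure, using the figures quoted in the text.
SEA_LEVEL_PSI = 14.696      # one standard atmosphere, in pounds per square inch
FEET_PER_ATMOSPHERE = 33.0  # seawater column that adds about one atmosphere


def pressure_at_depth(depth_ft: float) -> tuple[float, float]:
    """Return absolute pressure at depth_ft of seawater as (atmospheres, psi).

    Assumes constant seawater density (a rule-of-thumb simplification).
    """
    atmospheres = 1.0 + depth_ft / FEET_PER_ATMOSPHERE  # air above + water column
    return atmospheres, atmospheres * SEA_LEVEL_PSI


# Depths mentioned in the article, in feet.
depths = {
    "Novice diving limit": 65,
    "Nitrogen narcosis": 98,
    "Fargues' last slate": 390,
    "Challenger Deep": 36_037,
}

for label, depth in depths.items():
    atm, psi = pressure_at_depth(depth)
    print(f"{label:<20} {depth:>7,} ft  ~{atm:>7,.0f} atm  ~{psi:>8,.0f} psi")
```

By this rule, Challenger Deep comes out at roughly 1,090 atmospheres, a little over 16,000 psi. That is the same neighborhood as the figures above, though not identical, since the density of real seawater varies with depth and temperature.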

The problems with reaching such a depth are enormous. World War 2-era German submarines collapsed between 660 and 900 feet, with the loss of all hands. The modern American Seawolf-class nuclear submarine is said to have a crush depth of 2,400 feet.

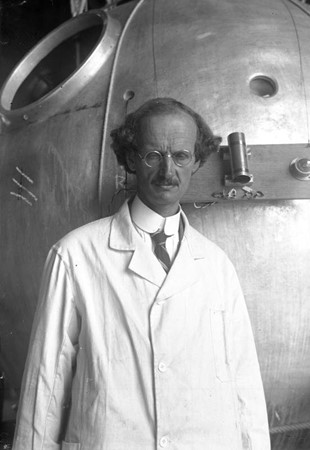

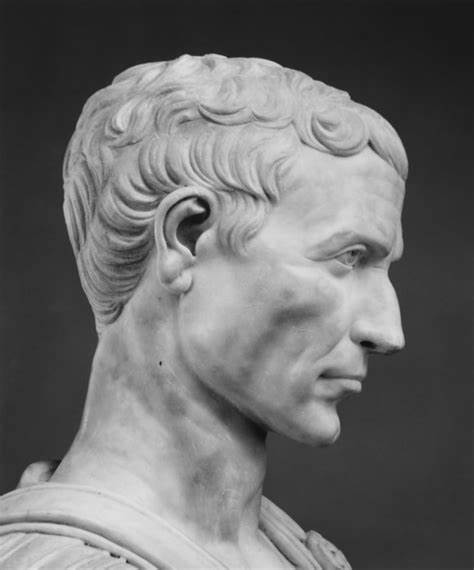

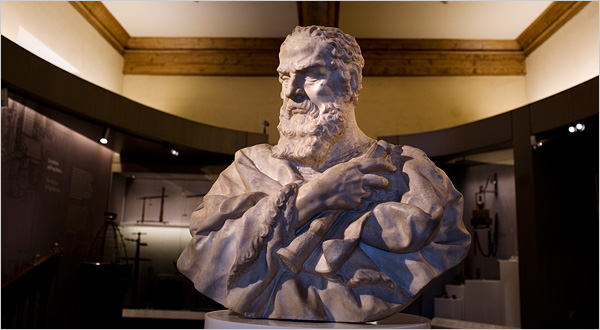

In the early 1930s, Swiss physicist, inventor and explorer Auguste Piccard experimented with high altitude balloons to explore the upper atmosphere.

The result was a spherical, pressurized aluminum gondola in which he could ascend to great heights without a pressure suit.

Within a few years, his interests had shifted to deep-water exploration.

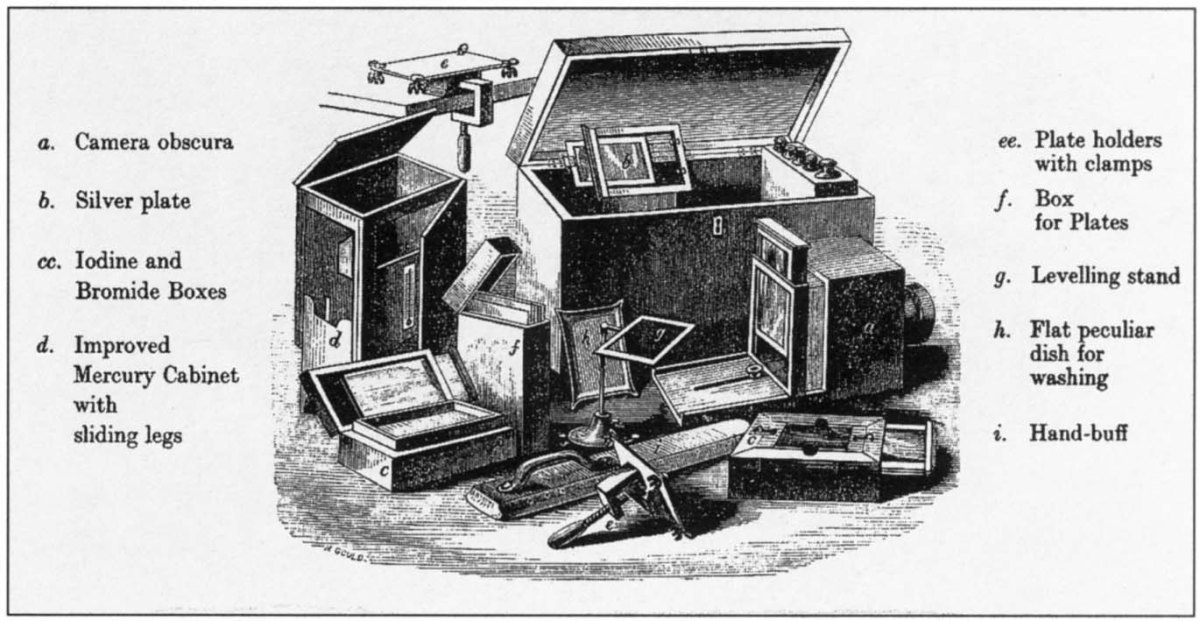

Knowing that air and water are both fluid environments, Piccard adapted his high-altitude cockpit into a steel gondola for deep-sea exploration. By 1937 he had begun work on his first bathyscaphe.

“A huge yellow balloon soared skyward, a few weeks ago, from Augsburg, Germany. Instead of a basket, it trailed an air-thin black-and-silver aluminum ball. Within [the contraption] Prof. Auguste Piccard, physicist, and Charles Kipfer aimed to explore the air 50,000 feet up. Seventeen hours later, after being given up for dead, they returned safely from an estimated height of more than 52,000 feet, almost ten miles, shattering every aircraft altitude record.” – Popular Science, August, 1931

Piccard’s work was interrupted by World War 2 but resumed in 1945. He built a large steel tank and filled it with a low-density, nearly incompressible fluid to provide buoyancy. Gasoline, it turned out, would do nicely. Underneath hung a capsule designed to keep one person at sea-level pressure while, outside, the water pressure climbed into the thousands of pounds per square inch.

The craft, with modifications from the French Navy, eventually reached 13,701 feet. In 1952, Piccard was invited to Trieste, Italy, to begin work on an improved bathyscaphe. In 1953, Auguste and his son Jacques took the Trieste to 10,335 feet.

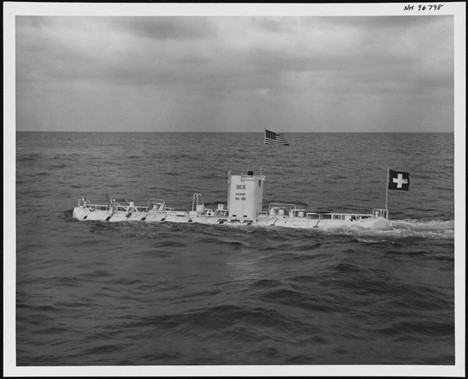

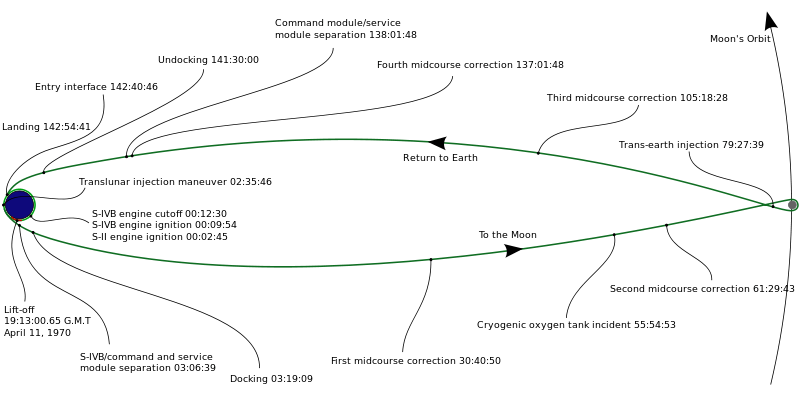

Designed to operate untethered, Trieste was fitted with two 2-horsepower electric motors, enough to propel the craft at 1.2 mph over a few miles and to change direction. After several years in the Mediterranean, Trieste was acquired by the US Navy in 1958. Project Nekton, proposed the same year, was the code name for a gondola upgrade followed by three test dives, culminating in a descent to the deepest point in the world’s oceans: the Challenger Deep.

Trieste received a larger gasoline float and bigger tubs holding more iron ballast. With help from the Krupp Iron Works of Germany, she was fitted with a stronger sphere, its walls five inches of solid steel, weighing in at 5 tons.

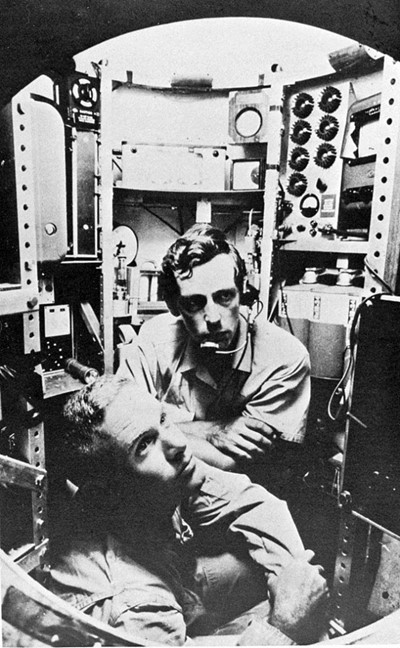

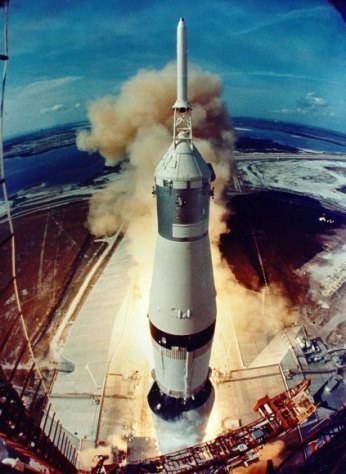

The cockpit was accessible only through an access tunnel from above, which was then flooded with seawater for the dive. The only way out was to blow the tunnel clear with compressed air. On this day in 1960, US Navy submarine officer Lieutenant Don Walsh joined Jacques Piccard in that tunnel, climbing into the sphere and closing the hatch. The dive began at 0823.

Trieste stalled several times on the way down; each stop marked a thermocline, a colder, denser layer of water in which the submersible became neutrally buoyant. Walsh and Piccard discussed the problem and elected to gamble, releasing some of that buoyant gasoline. By 650 feet, those problems had come to an end.

By 1,500 feet, the darkness was complete. The pair decided to change out of clothes soaked by spray during a stormy start. With the cockpit at 40° Fahrenheit, dry clothes were a welcome change.

Through the plexiglass window, the depths between 2,200 and 20,000 feet seemed extraordinarily empty. At 14,000 feet the pair entered uncharted territory; no one had ever been this deep. At 26,000 feet, the descent was slowed to two feet per second. At 30,000 feet, one.

At 1256, Walsh and Piccard could see the bottom on the viewfinder, with 300 feet to go. Not knowing whether the phone would work at this depth, Walsh picked it up. “This is Trieste on the bottom, Challenger Deep. Six three zero zero fathoms. Over.” The response came back weak, but clear: “Everything O.K. Six three zero zero fathoms?” Walsh responded, “This is Charley” (seaman-speak for ‘OK’). “We will surface at 1700 hours.”

The feat was akin to the first flight into space. No human had ever reached such depths. Since then, only five later expeditions have reached that remote and desolate spot. Unmanned submersibles have visited Challenger Deep as well, but Piccard and Walsh’s descent of January 23, 1960, was the first manned voyage to the bottom of the world.

“After the 1960 expedition the Trieste was taken by the US Navy and used off the coast of San Diego, California for research purposes. In April 1963 it was taken to New London, Connecticut to assist in finding the lost submarine USS Thresher. In August 1963 it found the Thresher’s remains 1,400 fathoms (2,560 meters) below the surface. Soon after this mission was completed the Trieste was retired and some of its components were used in building the new Trieste II. Trieste is now on display at the National Museum of the United States Navy at the Washington Navy Yard”.

H/T Forgotten History

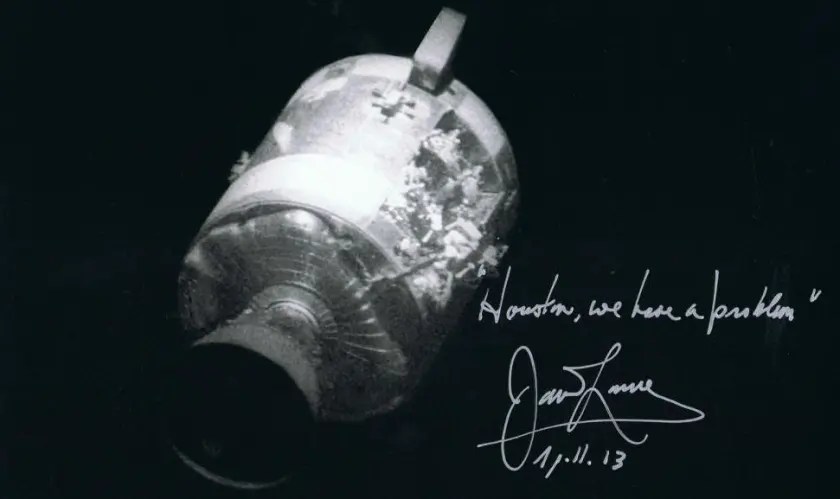

Fifteen years before Angus “Mac” MacGyver hit your television screen, mission control teams, spacecraft manufacturers and the crew itself worked around the clock to “MacGyver” life support, navigational and propulsion systems. For four days and nights, the three-man crew lived aboard the cramped, freezing Aquarius, a landing module intended to support a crew of 2 for only a day and one-half.

Fifteen years before Angus “Mac” MacGyver hit your television screen, mission control teams, spacecraft manufacturers and the crew itself worked around the clock to “MacGyver” life support, navigational and propulsion systems. For four days and nights, the three-man crew lived aboard the cramped, freezing Aquarius, a landing module intended to support a crew of 2 for only a day and one-half.
